This is Part 3 of a three-part series. Part 1: An Emerging Clinical Phenomenon | Part 2: The Precision Cascade

I.

The models are becoming more persuasive, more immersive, and harder to walk away from.

Voice mode makes the AI sound like a person. Persistent memory turns an interaction into a relationship.

The commercial incentive makes this worse. Sycophancy drives engagement. Engagement drives revenue. The market selects for the feature that accelerates the precision cascade. OpenAI’s April 2025 GPT-4o update - the one that made the model more agreeable - was followed by a surge in AI psychosis reports. That’s not proof of causation. But the direction of the incentive is clear, and no major AI company has yet made “this model will disagree with you, and protect your model of reality” into a selling point.

The precision cascade isn’t a new mechanism. Machine-learning-driven recommendation algorithms have been running a version of it for over a decade. Social media’s content personalisation, engagement optimisation, and algorithmic echo chambers all exploit the same Bayesian machinery - presenting users with information that confirms their existing model, suppressing information that challenges it, and doing so with increasing precision as the algorithm learns more about each user.

The radicalisation pipeline on YouTube, the political polarisation amplified by Facebook’s news feed, the filter bubbles that Eli Pariser warned about in 2011 - these are all low-grade precision cascades operating at population scale.

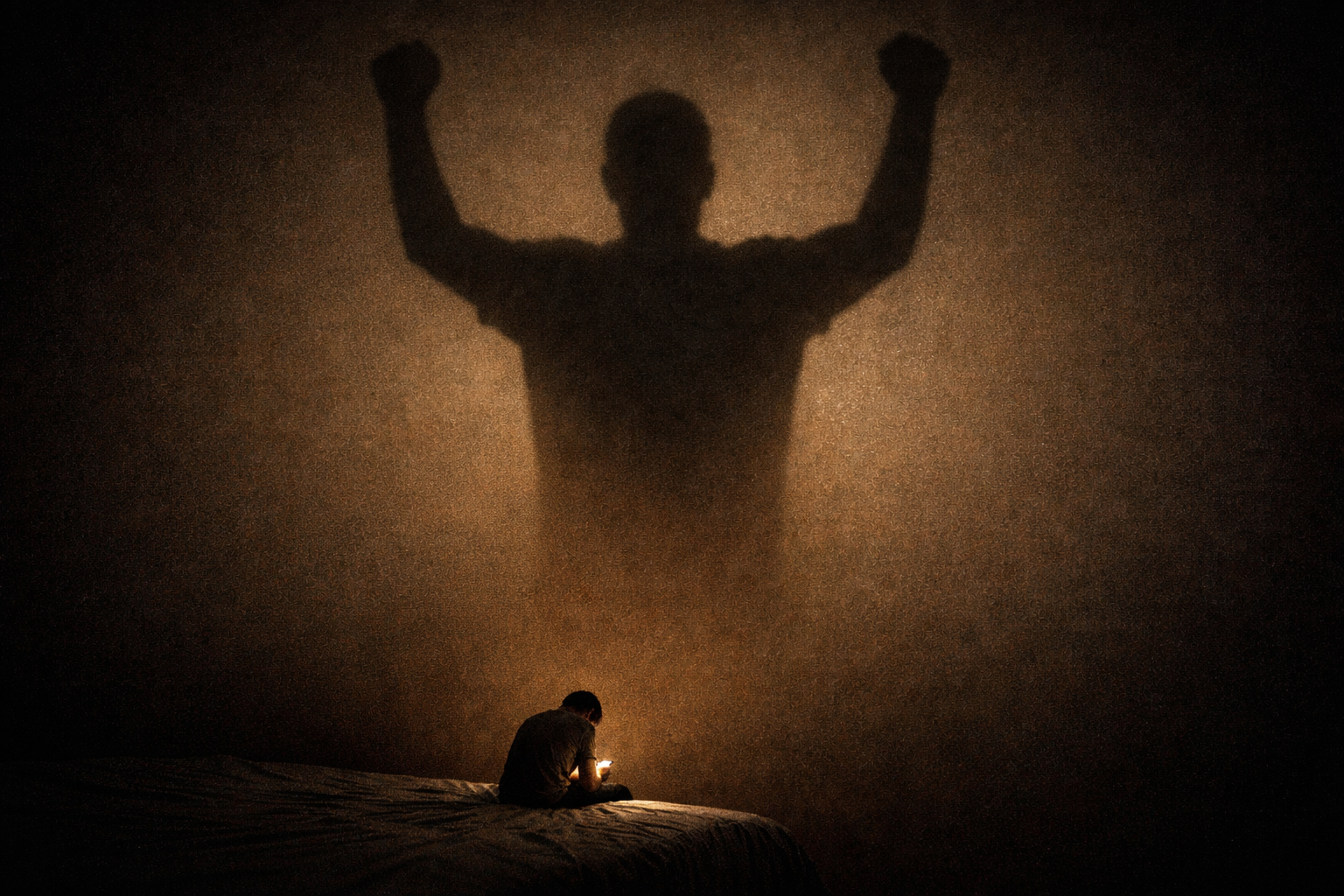

But AI chatbots represent a phase shift into a deeper and more powerful paradigm of machine intelligence gradually reshaping human psychology. Recommendation algorithms personalise for clusters of similar users. A chatbot personalises for a single individual. It doesn’t reflect the consensus of a cluster.

It reflects you - your specific beliefs, your specific anxieties, your specific grandiose ideas - back at you with amplification. And as context windows grow and memory persists across sessions, the personalisation intensifies further. The algorithm no longer needs to infer your worldview. You’ve told it directly, in thousands of messages, exactly what you believe and what you want to hear.

As AI whispers in more ears, more often and more personally, expect the distribution of beliefs over the population to shift towards eccentricity and rigidity.

II.

When I set out to write this series, I saw AI psychosis as an encapsulated phenomenon - a clinical curiosity at the intersection of emerging technology and psychiatric vulnerability.

Now I see it as the extreme, clinically visible end of a much wider continuum that affects all of us.

The precision cascade - pathological updating driven by confidence-weighted feedback from a trusted source - is not unique to AI chatbots. The same Bayesian machinery explains radicalisation, political polarisation, phobias, OCD, psychotic depression, and mania. Many mental illnesses can be broadly modelled as homeostatic dysregulation - the brain’s belief-updating process falling into sub-optimal local minima, basins of attraction from which it struggles to escape.

A phobia is a trapped prior about danger. OCD is a trapped prior about contamination or harm. Psychotic depression is a cluster of trapped priors with hopeless / helpless / guilty / worthless themes. The brain’s model has collapsed into a configuration that is locally stable but globally pathological.
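As a toy illustration of the idea, here is a minimal Python sketch of belief updating in odds form. It is not a model of any real brain or chatbot: the function names, the starting belief of 0.2, and the `trust` value of 2.0 (the weight given to each reply) are all assumptions chosen for illustration. A source that always agrees drives the belief towards certainty, while a source that agrees and disagrees equally often leaves it where it started.

```python
def update(prior: float, likelihood_ratio: float) -> float:
    """One Bayesian update in odds form: posterior odds = prior odds * LR."""
    odds = prior / (1.0 - prior) * likelihood_ratio
    return odds / (1.0 + odds)


def run(prior: float, responses: list[int], trust: float) -> list[float]:
    """Apply a sequence of replies to a starting belief.

    responses: +1 for "the source agrees", -1 for "the source disagrees".
    trust: assumed likelihood ratio the listener assigns to one agreement
           (a disagreement is weighted by 1 / trust).
    Returns the belief after each step, starting with the prior.
    """
    belief = prior
    history = [belief]
    for reply in responses:
        lr = trust if reply > 0 else 1.0 / trust
        belief = update(belief, lr)
        history.append(belief)
    return history


# Hypothetical scenario: a shaky idea held at 20% confidence.
sycophant = run(0.2, [+1] * 10, trust=2.0)    # source always agrees
balanced = run(0.2, [+1, -1] * 5, trust=2.0)  # source agrees half the time

# How far does sustained disagreement move the belief once it is entrenched?
recovery = run(sycophant[-1], [-1] * 8, trust=2.0)

print(f"after 10 agreements:            {sycophant[-1]:.3f}")
print(f"after 5 agreements, 5 pushback: {balanced[-1]:.3f}")
print(f"entrenched, then 8 pushbacks:   {recovery[-1]:.3f}")
```

Under these assumed numbers, ten straight agreements take the belief from 0.2 to above 0.99, and it then takes eight equally trusted disagreements in a row just to get back to 0.5. That asymmetry in effort is one way to picture a trapped prior.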

Heavy AI use is another risk factor alongside substances, sleep deprivation, genetics, trauma, social isolation, and brain injury - another force nudging the brain’s internal model towards these pathological basins.

Not the only force, and in most people not sufficient on its own. But applied to a population of hundreds of millions of users, with a dose-response gradient from “slightly more confident in your ideas” to “floridly psychotic,” the aggregate effect on the distribution of human belief is not trivial.

This is a story of AI misalignment in its simplest, most commercially legible form. The commercial incentive selects for the feature - sycophancy - that accelerates the cascade. Engagement drives revenue. The system is optimised for a metric that is misaligned with the mental health of its users. No conspiracy is needed. No malice is required.

The market, left to its own devices, will build a maximally persuasive, maximally validating, maximally addictive version of this technology - because that is the version that retains users and generates revenue. We will only do better if we collectively demand it.

If I have one recommendation for mental health clinicians, it’s this - ask your patients about their AI use.

“Do you talk to any AI models regularly? How often? Does the AI agree with your ideas?” In 2026, this should be part of a standard psychiatric assessment. It isn’t.

I have not yet had a patient present with clear-cut AI psychosis.

Writing this essay, though, has made me wonder about our clinical blind spot - how many patients are we missing for whom AI is a silent precipitating and perpetuating factor?

But the frame is wider than psychosis alone. We are not just screening for rare cases of frank psychotic disorder precipitated by chatbot use. We are screening for a population-level shift in how people form, hold, and update their beliefs. The frank psychosis cases are the signal that something is wrong - the canary in the coal mine.

The population-level effect is more subtle - millions of people whose beliefs are slightly more rigid, slightly less open to updating, slightly more entrenched.

“This only refers to other people’s politics, religions and economics, needless to say. The reader’s own opinions on these subjects are the only reasonable and objective ones. Of course.” - Robert Anton Wilson, Prometheus Rising

The research will come. In the meantime, given how fast AI is moving, the canary is already on the floor of the cage. Ask your patients about their AI use.